How to tell if AI threatens YOUR job

No, really, this post may give you a way to answer that

By Justin Searls

As a young lad, I developed a habit of responding to the enthusiasm of others with fear, skepticism, and judgment.

While it never made me very fun at parties, my hypercritical reflex has been rewarded with the sweet satisfaction of being able to say “I told you so” more often than not. Everyone brings a default disposition to the table, and for me that includes a deep suspicion of hope and optimism as irrational exuberance.

But there’s one trend people are excited about that—try as I might—I’m having a hard time passing off as mere hype: generative AI.

There’s little doubt at this point: the tools that succeed DALL•E and ChatGPT will have a profound impact on society. If it feels obvious that self-driving cars will put millions of truckers out of work, it should be clear that even more white-collar jobs will be rendered unnecessary by this new class of AI tools.

While Level 4 autonomous vehicles may still be years away, production-ready AI is here today. It’s already being used to do significant amounts of paid work, often with employers being none the wiser.

If truckers deserve years of warnings that their jobs are at risk, we owe it to ourselves and others to think through the types of problems that generative AI is best equipped to solve, which sorts of jobs are at greatest risk, and what workers can start doing now to prepare for the profound disruption that’s coming for the information economy.

So let’s do that.

Now it’s time to major bump Web 2.0

Computer-generated content wouldn’t pose the looming threat it does without the last 20 years of user-generated content blanketing the Internet to fertilize it.

As user-generated content came to dominate the Internet with the advent of Web 2.0 in the 2000s, we heard a lot about the Wisdom of the Crowd. The theory was simple: if anyone could publish content to a platform, then users could rank that content’s quality (whether via viewership metrics or explicit upvotes), and eventually the efforts of the (unpaid!) general public would outperform the productivity of (quite expensive!) professional authors and publishers. The winners, under Web 2.0, would no longer be the best content creators, but the platforms that successfully achieve network effects and come to mediate everyone’s experience with respect to a particular category of content.

This theory quickly proved correct. User-generated content so dramatically outpaced “legacy” media that the newspaper industry is now a shell of its former self—grasping at straws like SEO content farms, clickbait headlines, and ever-thirstier display ads masquerading as content. The fact I’ve already used the word “content” eight times in two paragraphs is a testament to how its unrelenting deluge under Web 2.0 has flattened our relationship with information. “Content” has become a fungible resource to be consumed by our eyeballs and earholes, which transforms it into a value-added product called “engagement,” and which the platform owners in turn package and resell to advertisers as a service called “impressions.”

And for a beautiful moment in time, this system created a lot of value for shareholders.

But the status quo is being challenged by a new innovation, leading many of Web 2.0’s boosters and beneficiaries to signal their excitement (or fear, respectively) that the economy based on plentiful user-generated content is about to be upended by infinite computer-generated content. If we’re witnessing the first act of Web 3.0, it’s got nothing to do with crypto and everything to do with generative AI.

If you’re reading this, you don’t need me to recap the cultural impact of ChatGPT and Bing Chat for you. Suffice it to say, if Google, the runaway winner of the Web 2.0 economy, is legit shook, there’s probably fire to go with all this smoke. Moreover, the same incumbent is already at the forefront of AI innovation and is nevertheless terrified by this sea change, which suggests Google believes we’re witnessing a major market disruption in addition to a technological one.

One reason I’ve had AI on the mind lately: I’ve started a personal project to build an AI chatbot for practicing Japanese, and I’m livecoding 100% of my work for an educational video series I call Searls After Dark.

But you’re not a tech giant. You’re wondering what this means for you and your weekend. And I think we’re beginning to identify the contours of an answer to that question.

ChatGPT can do some people’s work, but not everyone’s

A profound difference between the coming economic upheaval and those of the past is that it will most severely impact white-collar workers. Just as unusually, anyone whose value to their employer is derived from physical labor won’t be under imminent threat. Everyone else is left to ask: will generative AI replace my job? Do I need to be worried?

After months of programming with GitHub Copilot, weeks of talking to ChatGPT, and days of searching via Bing Chat instead of Google, I’ve concluded that the best description I’ve heard of AI’s capabilities is “fluent bullshit.” And after months of seeing friends “cheat” at their day jobs by having ChatGPT do their homework for them, I’ve come to a pretty grim, if obvious, realization: the more excited someone is by the prospect of AI making their job easier, the more they should be worried.

Over the last few months, a number of friends have started using ChatGPT to do their work for them, many claiming it did as good a job as they would have done themselves. Examples include:

- Summarizing content for social media previews

- Authoring weekly newsletters

- E-mailing follow-ups to sales prospects and clients

- Submitting feature specifications for their team’s issue tracker

- Optimizing the performance of SQL queries and algorithms

- Completing employees’ performance reviews

Each time I’d hear something like this, I’d get jealous, open ChatGPT for myself, and feed it whatever problem I was working on. It never worked. Sometimes it’d give up and claim the thing I was trying to do was too obscure. Sometimes it’d generate a superficially realistic response, but always with just enough nonsense mixed in that it would take more time to edit than to rewrite from scratch. But most often, I’d end up wasting time stuck in this never-ending loop:

1. Ask ChatGPT to do something

2. It responds with an obviously wrong answer

3. Explain to ChatGPT why its response is wrong

4. It politely apologizes (“You are correct, X in fact does not equal Y. I apologize.”) before immediately generating an equally incorrect answer

5. GOTO 3

I got so frustrated asking it to help me troubleshoot my VS Code task configuration that I recorded my screen and set it to a few lofi tracks before giving up.

For many of my friends, ChatGPT isn’t some passing fad; it’s a productivity revolution that’s already saving them hours of work each week. But for me and many other friends, ChatGPT is a clever parlor trick that fails each time we ask it to do anything meaningful. What gives?

Three simple rules for keeping your job

I’ve spent the last few months puzzling over this. Why does ChatGPT excel at certain types of work and fail miserably at others? Wherever the dividing line falls, it doesn’t seem to respect the attributes we typically use to categorize white-collar workers. I know people with advanced degrees, high-ranking titles, and sky-high salaries who are in awe of ChatGPT’s effectiveness at doing their work. But I can name just as many roles that sit near the bottom of the org chart, require no special credentials, and don’t pay particularly well, yet for which ChatGPT isn’t even remotely useful.

Here’s where I landed. If your primary value to your employer is derived from a work product that includes all of these ingredients, your job is probably safe:

- Novel: The subject matter is new or otherwise not well represented in the data that the AI was trained on

- Unpredictable: It would be hard to predict the solution’s format and structure based solely on a description of the problem

- Fragile: Minor errors and inaccuracies would dramatically reduce the work’s value without time-intensive remediation from an expert

To illustrate, each of the following professions has survived previous revolutions in information technology but will find itself under tremendous pressure from generative AI:

- A lawyer that drafts, edits, and red-lines contracts for their clients will be at risk because most legal agreements fall into one of a few dozen categories. For all but the most unusual contracts, any large corpus of training data will include countless examples of similar-enough agreements that a generated contract could incorporate those distinctions while retaining a high degree of confidence

- A travel agent that plans vacations by synthesizing a carefully curated repertoire of little-known points of interest and their customers’ interests will be at risk because travel itineraries conform to a rigidly consistent structure. With training, a stochastic AI could predictably fill in the blanks of a traveler’s agenda with “hidden” gems while avoiding recommending the same places to everyone

- An insurance broker responsible for translating known risks and potential liabilities into a prescribed set of coverages will themselves be at risk because most policy mistakes are relatively inconsequential. Insurance covers low-probability events that may not take place for years—if they occur at all—so there’s plenty of room for error for human and AI brokers alike (and plenty of boilerplate legalese to protect them)

This also explains why ChatGPT has proven worthless for every task I’ve thrown at it. Let’s consider whether that’s because my work as an experienced application developer meets the three criteria identified above:

- Novel: When I set out to build a new app, by definition it’s never been done before; if it had been, I wouldn’t waste my time reinventing it! That means there won’t be much similar training data for an AI to sample from. Moreover, because I prefer expressive, terse languages like Ruby and frameworks like Rails that promote DRY code, there just isn’t all that much for GitHub Copilot to suggest to me (and when it does generate a large chunk of correct code, I interpret it as a smell that I’m needlessly reinventing a wheel)

- Unpredictable: I’ve been building apps for over 20 years and I still feel a prick of panic that I won’t figure out how to make anything work. Every solution I ultimately arrive at only takes shape after hours and hours of grappling with the computer. Whether you call programming trial-and-error or dress it up as “emergent design,” the upshot is that the best engineers tend to be resigned to the fact that the architectural design of the solution to any problem is unknowable at the outset and can only be discovered through the act of solving

- Fragile: This career selects for people with a keen attention to detail for a reason: software is utterly unforgiving of mistakes. One errant character is enough to break a program millions of lines long. Subtle bugs can have costly consequences if deployed, like security breaches and data loss. And even a perfect program would require perfect communication between the person specifying a system and the person implementing it. While AI may one day create apps, the precision and accuracy required make probabilistic language models poorly suited for the task

This isn’t to say my job is free of drudgery that generative AI could take off my hands (like summarizing the <meta name="description"> tag for this post), but, unlike someone who makes SEO tweaks for a living, delegating ancillary, time-consuming work actually makes me more valuable to my employer because it frees up more time for stuff AI can’t do (yet).

So if you’re a programmer like me, you’re probably safe!

Job’s done. Post over.

Post not over: How can I save my job?

So what can someone do if their primary role doesn’t produce work that checks the three boxes of novelty, unpredictability, and fragility?

Here are a few ideas that probably won’t work:

- Ask major tech companies to kindly put this genie back into the bottle

- Lobby for humane policies to prepare for a world that doesn’t need every human’s labor

- Embrace return-to-office mandates by doing stuff software can’t do, like stocking the snack cabinet and proactively offering to play foosball with your boss

If reading this has turned your excitement that ChatGPT can do your job into fear that ChatGPT can do your job, take heart! There are things you can do today to prepare.

Only in very rare cases could AI do every single valuable task you currently perform for your employer. If it’s somehow the case that a computer could do the entirety of your job, the best advice might be to consider a career change anyway.

Suppose we approached AI as a new form of outsourcing. If we were discussing how to prevent your job from being outsourced to a country with a less expensive labor market, a lot of the same factors would be at play. As a result, if you were my friend (just kidding! You are my friend, I swear!) and you were worried about AI taking your job, here’s what I’d recommend you do:

- Identify your contributions that have business value (i.e. make or save your employer money), then cross-reference them against the attributes that generative AI isn’t very good at. The robots probably aren’t coming for you tomorrow: work with your manager to gradually steer your role to maximize the time spent on humanity-dependent work and reduce time spent on easily-outsourced tasks

- As we’ve learned from countless attempts to offshore software development, communication is always the bottleneck. Three things that would be hard for an AI to accomplish through a chat window but that you could start doing right now: fostering high-trust relationships, collaborating across teams, and facilitating high-bandwidth communication between others. Taking an interdisciplinary approach to your work will not only make it more difficult for someone to outsource you, but could also have the unintended consequence of leading to a more meaningful, enriching career

- The natural response to any threat is fight, flight, or freeze, but, counterintuitively, one of the best ways to protect your job from AI is to become an enthusiastic early adopter of AI tools. If you use them to supercharge your productivity, your employer will value your increased output. Additionally, your newfound skills will put you in a better position to lead your company’s future AI efforts (just ask the former editor-in-chief of CNET)

Like all the best things in life, this advice is simple but hard. The reason I wrote this post now (instead of waiting until GPT-9.5 could write it just as well for me) is that there is still plenty of time for knowledge workers to adapt to the changes that AI innovations are likely to bring. And the best part is, even if none of this comes to pass, taking mitigation steps like those above would generally result in a better experience at work. It’s a win-win!

In conclusion

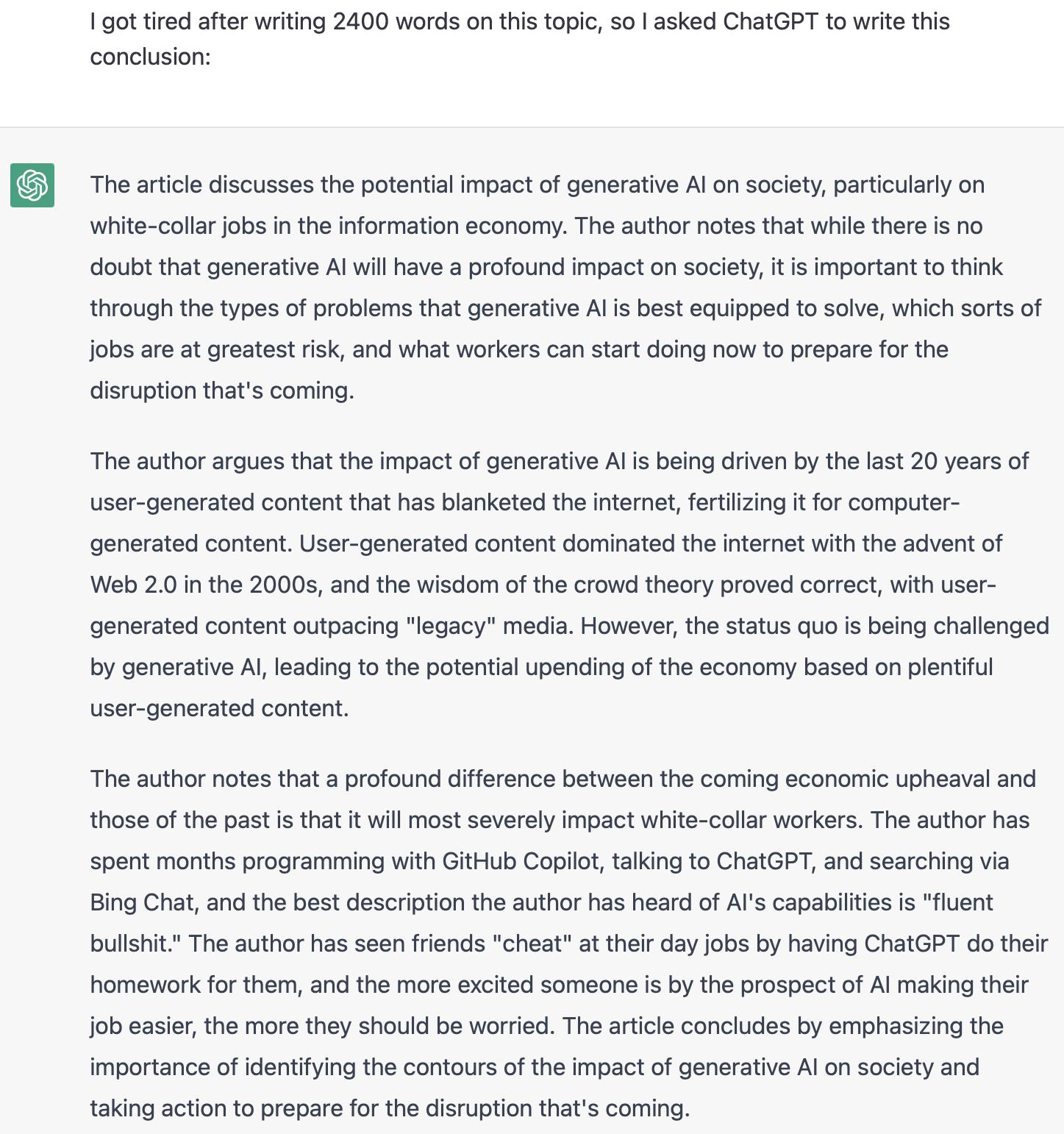

I got tired after writing 2400 words on this topic, so I asked ChatGPT to write a conclusion after feeding it this post.

Here’s what it spat out:

Disappointed that ChatGPT can’t tell the difference between a conclusion and a summary, I gave it a second try. The following screenshot is not modified; this was its actual response:

So, in conclusion: eat more olives. 🫒

Justin Searls

Status: Double Agent

Code Name: Agent 002

Location: Orlando, FL